Governing Autonomy in the Age of Intelligent Agency

By Dr Luke Soon

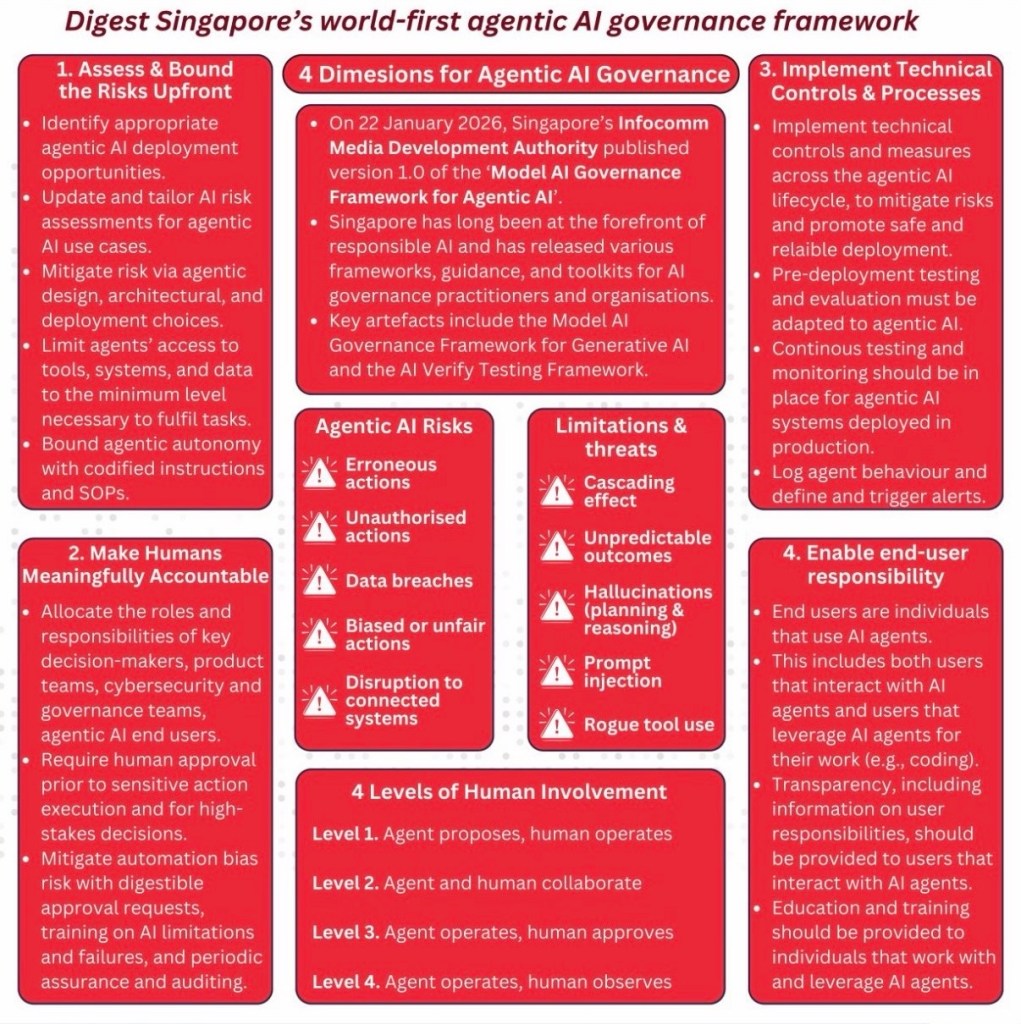

On 22 January 2026, Singapore’s Infocomm Media Development Authority (IMDA) released Version 1.0 of the Model AI Governance Framework for Agentic AI — the world’s first governance framework purpose-built not merely for intelligent systems, but for autonomous ones.

This is a pivotal moment.

For the past decade, AI governance has focused primarily on models — their bias, fairness, explainability, transparency and robustness. Yet we now stand at a new threshold: AI systems are no longer simply predictive engines. They are agents — capable of planning, tool use, orchestration, and autonomous execution across digital environments.

The governance challenge has shifted from “Is the model accurate?” to “What happens when the model acts?”

Singapore has recognised this shift early — and acted decisively.

From Intelligence to Agency

Agentic AI represents a structural evolution.

These systems:

- Form plans
- Invoke external tools
- Access data stores
- Trigger downstream processes
- Interact with other agents

This introduces a new class of risks:

- Cascading system failures
- Unauthorised tool execution
- Prompt injection attacks
- Planning hallucinations
- Emergent behaviour in multi-agent ecosystems

As Professor Yoshua Bengio has repeatedly cautioned, we are entering an era where AI systems may optimise towards objectives in ways misaligned with human intent. Geoffrey Hinton has warned about systems that may act in unpredictable ways once sufficiently capable. Mustafa Suleyman has spoken of the coming “containment problem” as AI gains agency. Fei-Fei Li has emphasised the need for human-centred AI rooted in accountability. Ilya Sutskever has highlighted the importance of safety research scaling in tandem with capability research.

What Singapore’s new framework does is operationalise these concerns.

It translates philosophical and academic debate into deployable governance architecture.

The Four Dimensions of Agentic AI Governance

1. Assess and Bound Risks Upfront

The framework asks organisations to identify suitable deployment contexts for agentic AI and to bound autonomy explicitly through architectural and procedural controls.

This is crucial.

As Emad Mostaque has argued, open and distributed AI innovation will accelerate capability diffusion. Governance must therefore be embedded at design stage — not retrofitted post-deployment.

Singapore’s approach is clear:

- Limit agent access to the minimum required tools and data
- Codify autonomy boundaries through structured instructions
- Adapt risk assessments specifically for agentic use cases

Autonomy becomes a design decision — not a default feature.
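To make the first of these points concrete, here is a minimal sketch of least-privilege tool access. It is an illustration only, not something the framework prescribes: `AgentPolicy`, `ToolGateway`, `ToolNotPermitted` and the two example tools are hypothetical names invented for this example. The idea is simply that every tool call passes through one gateway that denies anything not on the agent's allowlist.

```python
from dataclasses import dataclass
from typing import Callable, Dict, FrozenSet


class ToolNotPermitted(Exception):
    """Raised when an agent requests a tool outside its allowlist."""


@dataclass(frozen=True)
class AgentPolicy:
    # Hypothetical policy object: the only tools this agent may call.
    agent_id: str
    allowed_tools: FrozenSet[str]


class ToolGateway:
    """Routes every tool call through an allowlist check (deny by default)."""

    def __init__(self, tools: Dict[str, Callable[..., object]]):
        self._tools = tools

    def call(self, policy: AgentPolicy, tool_name: str, **kwargs):
        # Anything not explicitly granted to this agent is refused.
        if tool_name not in policy.allowed_tools:
            raise ToolNotPermitted(f"{policy.agent_id} may not call {tool_name}")
        return self._tools[tool_name](**kwargs)


# Illustrative tools; real deployments would wrap actual integrations.
def search_docs(query: str) -> str:
    return f"results for {query}"


def send_payment(amount: float) -> str:
    return f"paid {amount}"


gateway = ToolGateway({"search_docs": search_docs, "send_payment": send_payment})
research_agent = AgentPolicy("research-agent", frozenset({"search_docs"}))
```

With this setup, `gateway.call(research_agent, "search_docs", query="IMDA")` succeeds, while a call to `send_payment` raises `ToolNotPermitted`. Keeping the check in a single gateway means the autonomy boundary lives in the architecture rather than in the agent's prompt.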

2. Make Humans Meaningfully Accountable

The framework formalises four levels of human involvement:

- Agent proposes, human operates
- Agent and human collaborate
- Agent operates, human approves
- Agent operates, human observes

This graduated model prevents blind automation.

It mitigates what human-factors researchers call automation bias: the human tendency to over-trust machine output.

Professor Fei-Fei Li’s longstanding advocacy for human-centred AI is reflected here: agency may scale, but accountability cannot disappear.

In high-stakes environments — finance, healthcare, national infrastructure — human oversight must remain structurally embedded.
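One way to picture the four levels is as an explicit setting that gates what an agent may do on its own. The sketch below is an assumption-laden illustration, not the framework's specification: the `Oversight` enum, the `run_action` function and its callbacks are hypothetical, and treating "collaborate" the same as "approve" (a human must confirm before execution) is a simplification made for this example.

```python
from enum import Enum
from typing import Callable


class Oversight(Enum):
    """The four levels of human involvement named in the framework."""
    AGENT_PROPOSES_HUMAN_OPERATES = 1
    AGENT_AND_HUMAN_COLLABORATE = 2
    AGENT_OPERATES_HUMAN_APPROVES = 3
    AGENT_OPERATES_HUMAN_OBSERVES = 4


def run_action(
    level: Oversight,
    description: str,
    action: Callable[[], None],
    approve: Callable[[str], bool],
    notify: Callable[[str], None],
) -> str:
    """Execute `action` only as far as the oversight level allows."""
    if level is Oversight.AGENT_PROPOSES_HUMAN_OPERATES:
        # The agent only suggests; a human carries out the step.
        return "proposed"
    if level in (
        Oversight.AGENT_AND_HUMAN_COLLABORATE,
        Oversight.AGENT_OPERATES_HUMAN_APPROVES,
    ):
        # Assumption: both levels need an explicit human sign-off here.
        if not approve(description):
            return "rejected"
        action()
        return "executed"
    # Human observes: the agent acts, then the human is informed.
    action()
    notify(description)
    return "executed"
```

A high-stakes workflow might pin `level` to `AGENT_OPERATES_HUMAN_APPROVES` in configuration, so that loosening oversight becomes a deliberate, reviewable change rather than a side effect of how the agent was prompted.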

3. Implement Technical Controls and Continuous Monitoring

Traditional AI governance often stops at pre-deployment testing.

Agentic AI demands continuous observability.

The framework calls for:

- Lifecycle-wide technical controls
- Continuous monitoring in production
- Behavioural logging
- Alert triggers for anomalous agent activity

This aligns closely with emerging “agentic safety” research globally — including multi-agent red teaming, tool sandboxing, memory isolation, and planning validation layers.

As Geoffrey Hinton has warned, sufficiently capable systems may exhibit behaviours that were not explicitly programmed. Continuous monitoring becomes not just prudent — but essential.
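Behavioural logging and alert triggers can start very simply. The following sketch assumes a hypothetical `AgentMonitor` class with two illustrative anomaly rules of my own choosing (a tool outside the expected set, and too many calls within a sliding time window); real deployments would use richer signals and route alerts to an on-call system. It relies only on Python's standard `logging`, `time` and `collections` modules.

```python
import logging
import time
from collections import deque
from typing import Deque, Iterable, List, Optional

logger = logging.getLogger("agent.monitor")


class AgentMonitor:
    """Logs every tool call and raises alerts on two simple anomaly rules."""

    def __init__(self, max_calls: int, window_seconds: float,
                 expected_tools: Iterable[str]):
        self.max_calls = max_calls
        self.window_seconds = window_seconds
        self.expected_tools = set(expected_tools)
        self._timestamps: Deque[float] = deque()
        self.alerts: List[str] = []

    def record(self, agent_id: str, tool: str,
               now: Optional[float] = None) -> None:
        now = time.monotonic() if now is None else now
        # Behavioural log: one entry per tool call.
        logger.info("agent=%s tool=%s", agent_id, tool)

        # Keep only the calls that fall inside the sliding window.
        self._timestamps.append(now)
        while self._timestamps and now - self._timestamps[0] > self.window_seconds:
            self._timestamps.popleft()

        if tool not in self.expected_tools:
            self._alert(f"{agent_id} used unexpected tool {tool}")
        if len(self._timestamps) > self.max_calls:
            self._alert(
                f"{agent_id} exceeded {self.max_calls} calls "
                f"in {self.window_seconds}s"
            )

    def _alert(self, message: str) -> None:
        logger.warning(message)
        self.alerts.append(message)
```

Calling `monitor.record("agent-1", "search")` on every tool invocation produces an audit trail, and anything appended to `monitor.alerts` is a candidate for human review. The `now` parameter exists only so the behaviour can be tested deterministically.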

4. Enable End-User Responsibility

A particularly forward-looking aspect of the framework is its focus on end users.

Agentic systems are not merely backend tools; they increasingly sit alongside knowledge workers, coders, analysts, and decision-makers.

For these users, the framework emphasises three things:

- Transparency of responsibility
- Training on limitations
- Clear user accountability structures

This echoes Kai-Fu Lee’s observation that AI’s impact will not be purely technological — it will reshape labour, workflow, and social structures.

Governance must therefore extend beyond the model and into the human ecosystem around it.

Singapore’s Strategic Positioning

Singapore has long been at the forefront of responsible AI:

- The original Model AI Governance Framework (2019)
- AI Verify testing mechanisms
- Cross-border regulatory dialogues
- Public-private governance sandboxes

With this new Agentic Framework, Singapore transitions from governing static AI systems to governing dynamic autonomous ecosystems.

At a time when:

- The EU debates frontier model thresholds
- The United States advances sectoral AI oversight
- China accelerates industrial AI deployment

Singapore has chosen a pragmatic path: operational guidance over rhetoric.

This positions the nation as:

- A trusted AI deployment hub
- A regulatory testbed for autonomy
- A bridge between capability acceleration and safety assurance

A Deeper Reflection: The Governance of Autonomy

Agentic AI forces us to confront a profound philosophical question:

If machines can plan and act — where does responsibility reside?

The answer cannot be “with the machine.”

As Mustafa Suleyman has argued, the coming decade will require careful containment and boundary setting for powerful systems. As Yoshua Bengio advocates, governance must evolve in tandem with capability.

Singapore’s Model Framework offers an early blueprint.

Bound autonomy.

Maintain human accountability.

Instrument for observability.

Educate the ecosystem.

In my own work on Human Experience (HX = CX + EX, that is, customer experience plus employee experience), I argue that trust sits at the centre of digital transformation. Without trust, capability becomes liability.

Agentic AI amplifies this reality.

Autonomy without governance risks erosion of trust.

Governance without innovation risks stagnation.

Singapore has demonstrated that both can coexist.

The Global Implication

The world will inevitably follow.

As agentic AI systems proliferate across finance, logistics, healthcare, defence, and public services, structured governance models will be required.

The IMDA framework will likely influence:

- International standards bodies
- Multinational enterprises deploying autonomous agents
- AI safety research agendas
- Cross-border regulatory alignment

It is not the final answer.

But it is a first answer.

And in governance, first answers matter.
