Rewiring the Enterprise with AI Planning, Reflection, and Collaboration

By Dr Luke Soon, Partner at PwC | AI Ethicist | Author of Genesis

“Agentic AI is not just an upgrade—it’s a redefinition of how organisations think, reason, and act through machines.”

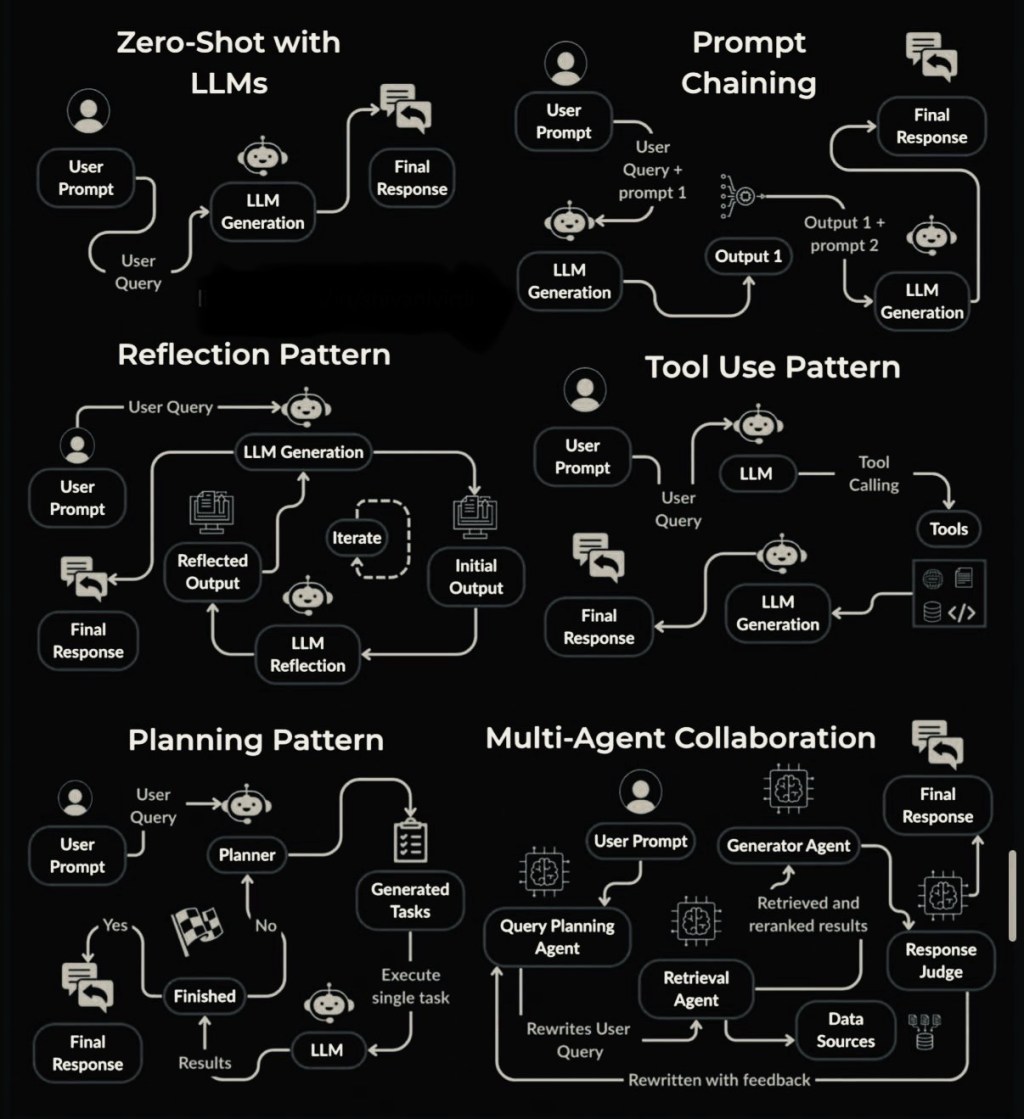

As enterprise leaders, we often talk about scaling GenAI, embedding trust, and achieving intelligent automation. But what most don’t realise is that the real breakthrough lies in agentic patterns—the evolving architectural motifs that transform isolated prompts into goal-directed, autonomous behaviours. The image above, originally shared by Shivani Virdi, elegantly lays out six foundational agentic AI patterns—from zero-shot LLMs to sophisticated multi-agent ecosystems. These aren’t just theoretical. They are already transforming enterprise operations, customer journeys, and strategic decision-making.

Let’s decode them, one by one—through the lens of applied strategy, PwC’s AI research, and my own work on HX (Human Experience) and AI governance.

1️⃣ Zero-Shot with LLMs: The Baseline

At the heart of most AI deployments today is a simple yet powerful structure: a user prompt passed directly to a large language model (LLM), which returns a final response. This is Zero-Shot Inference, used widely by knowledge workers, marketing teams, and customer service teams.

📊 PwC’s 2024 Global AI Jobs Barometer showed that over 70% of white-collar professionals are now using zero-shot LLM tools for summarisation, email drafting, and ideation.

Yet while this is fast and intuitive, it’s also inherently stateless. There is no memory, no strategy, no planning. It’s reactive, not agentic.
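To make "stateless" concrete, here is a minimal sketch, assuming a hypothetical `fake_llm` stub in place of a real model API. Each call is independent, so nothing from the first prompt is available to the second.

```python
from typing import Callable

# Any function that maps a prompt string to a reply string stands in for an LLM here.
LLM = Callable[[str], str]


def fake_llm(prompt: str) -> str:
    """Hypothetical deterministic stub in place of a real model API: it echoes the prompt."""
    return f"response to: {prompt}"


def zero_shot(llm: LLM, user_prompt: str) -> str:
    """One prompt in, one response out: no memory, no plan, no retries."""
    return llm(user_prompt)


first = zero_shot(fake_llm, "My name is Ana.")
second = zero_shot(fake_llm, "What is my name?")
# The second call never sees the first, so nothing about "Ana" can carry over.
print(second)
```

Anything resembling memory has to be added outside the model call, which is where the patterns below begin.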

2️⃣ Prompt Chaining: Sequential Reasoning

Prompt chaining decomposes a complex query into a sequence of LLM calls, with each output feeding into the next prompt. This adds causal logic and structured thought.

💡 Example: In customer support, Output 1 may classify the issue; Output 2 generates the resolution script.
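The customer-support chain above can be sketched in a few lines. This is an illustration under stated assumptions: `fake_llm` is a hypothetical stub standing in for a real model, and the prompt wording is invented. The key point is that step 2's prompt is built from step 1's output.

```python
from typing import Callable

LLM = Callable[[str], str]


def fake_llm(prompt: str) -> str:
    """Hypothetical stub standing in for a real model call, keyed on the prompt's task line."""
    if prompt.startswith("Classify"):
        return "billing"
    if prompt.startswith("Write a resolution"):
        category = prompt.split("category: ")[1].split("\n")[0]
        return f"Script for a {category} issue: apologise, check the invoice, issue a correction."
    return "unknown"


def support_chain(llm: LLM, ticket: str) -> dict[str, str]:
    # Output 1: classify the issue.
    category = llm(f"Classify this support ticket in one word.\nTicket: {ticket}")
    # Output 2: the classification is inserted into the next prompt.
    script = llm(f"Write a resolution script.\ncategory: {category}\nTicket: {ticket}")
    return {"category": category, "script": script}


result = support_chain(fake_llm, "I was charged twice this month.")
print(result["category"], "|", result["script"])
```

Because each step is a separate call, intermediate outputs can be logged or validated before the chain continues.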

From a design perspective, this begins to mirror traditional process pipelines—but now reimagined with LLM cognition.

🔍 PwC research in financial services found that prompt chaining reduced response time by 30–40% in complex client onboarding and regulatory checks when deployed with RAG-enhanced (retrieval-augmented generation) models.

3️⃣ Reflection Pattern: Metacognitive AI

Inspired by how humans revise their thoughts, this pattern loops the LLM’s output back into a reflection stage, where the system critiques the response and regenerates it iteratively. It is a key element in self-improving AI.
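A minimal generate, critique, revise loop might look like this. It is a sketch only: `fake_llm` is a hypothetical stub whose "critic" approves a draft once it cites a source, and the `CRITIQUE`/`REVISE` prompt prefixes are assumptions. The round limit is a deliberate design choice, so a critic that is never satisfied cannot loop forever.

```python
from typing import Callable

LLM = Callable[[str], str]


def fake_llm(prompt: str) -> str:
    """Hypothetical stub: a critic that approves drafts citing a source, a writer that adds one."""
    if prompt.startswith("CRITIQUE"):
        return "OK" if "[source]" in prompt else "Add a source for the claim."
    if prompt.startswith("REVISE"):
        draft = prompt.split("DRAFT: ")[1].split("\nFEEDBACK")[0]
        return draft + " [source]"
    return "The policy covers flood damage."


def reflect(llm: LLM, task: str, max_rounds: int = 3) -> tuple[str, int]:
    draft = llm(task)  # initial generation
    for round_no in range(1, max_rounds + 1):
        feedback = llm(f"CRITIQUE the draft. Reply OK if acceptable.\nDRAFT: {draft}")
        if feedback.strip() == "OK":  # critic is satisfied: stop looping
            return draft, round_no
        # Feed the critique back in and regenerate.
        draft = llm(f"REVISE the draft.\nDRAFT: {draft}\nFEEDBACK: {feedback}")
    return draft, max_rounds  # bounded: give up after max_rounds critiques


final, rounds = reflect(fake_llm, "Summarise the policy.")
print(final, rounds)
```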

This is especially critical in safety-sensitive domains.

🧠 In my recent blog “Guardians of Autonomy: Risk-Proofing the Agentic AI Frontier”, I argue that reflection loops help close the gap between intent and impact, forming the foundation for trusted autonomy.

This aligns with findings from PwC’s Responsible AI Toolkit, where the use of reflection reduced hallucination rates by up to 57% in test environments across the healthcare and legal sectors.

4️⃣ Tool Use Pattern: Augmenting Reasoning with Actions

Beyond language, agentic systems increasingly call external tools—calculators, code runners, APIs, and databases—to answer user queries.

This is a step toward tool-augmented cognition.

In enterprise use cases, it allows agents to:

- Pull real-time inventory
- Run simulations
- Trigger automation scripts
- Query SQL warehouses
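The core of tool use is a dispatch loop: the model either requests a tool or gives a final answer, and the runtime executes requested tools and feeds the results back. The sketch below assumes a hypothetical JSON convention (`{"tool": ..., "args": ...}`), a hypothetical inventory tool, and a `fake_llm` stub; real model providers each define their own function-calling formats.

```python
import json
from typing import Any, Callable

# Hypothetical tools; in production these would wrap inventory APIs, SQL queries, etc.
INVENTORY = {"SKU-1": 42, "SKU-2": 0}


def get_inventory(sku: str) -> int:
    return INVENTORY.get(sku, 0)


def multiply(a: float, b: float) -> float:
    return a * b


TOOLS: dict[str, Callable[..., Any]] = {"get_inventory": get_inventory, "multiply": multiply}


def fake_llm(prompt: str) -> str:
    """Hypothetical stub: requests a tool first, then answers once a tool result is present."""
    if "TOOL_RESULT" not in prompt:
        return json.dumps({"tool": "get_inventory", "args": {"sku": "SKU-1"}})
    result = prompt.split("TOOL_RESULT: ")[1]
    return f"There are {result} units of SKU-1 in stock."


def run_with_tools(llm: Callable[[str], str], question: str, max_steps: int = 5) -> str:
    prompt = question
    for _ in range(max_steps):
        reply = llm(prompt)
        try:
            call = json.loads(reply)
        except json.JSONDecodeError:
            return reply  # plain text: the model has given its final answer
        if not isinstance(call, dict) or "tool" not in call:
            return reply  # valid JSON but not a tool request: treat as final answer
        result = TOOLS[call["tool"]](**call["args"])  # execute the requested tool
        prompt = f"{question}\nTOOL_RESULT: {result}"
    raise RuntimeError("tool loop did not finish within max_steps")


answer = run_with_tools(fake_llm, "How many SKU-1 units are in stock?")
print(answer)
```

Keeping tools in an explicit registry means the agent can only call functions that were deliberately exposed to it.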

PwC’s internal testing within audit and tax functions showed that LLMs using code execution tools reduced manual effort by up to 45%, while increasing consistency of complex regulatory calculations.

5️⃣ Planning Pattern: Agent as Orchestrator

Here, a Planner agent receives the user query, breaks it down into discrete tasks, and executes them sequentially. This is where agentic systems start demonstrating multi-step reasoning and goal-oriented execution.
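A plan-then-execute loop can be sketched as follows, assuming a hypothetical `fake_llm` stub that returns a JSON list of tasks when asked to plan. Each task runs in order with earlier results passed forward as context, and the returned (task, output) trace is one simple way to support the outcome auditability discussed below.

```python
import json
from typing import Callable

LLM = Callable[[str], str]


def fake_llm(prompt: str) -> str:
    """Hypothetical stub: returns a JSON plan for PLAN prompts, else a placeholder step result."""
    if prompt.startswith("PLAN"):
        return json.dumps(["List competitors", "Summarise their pricing", "Draft recommendations"])
    task = prompt.split("TASK: ")[1].split("\n")[0]
    return f"done: {task}"


def plan_and_execute(llm: LLM, goal: str) -> list[tuple[str, str]]:
    # 1. The planner breaks the goal into an ordered list of discrete tasks.
    tasks = json.loads(llm(f"PLAN: break this goal into steps, as a JSON list.\nGOAL: {goal}"))
    results: list[tuple[str, str]] = []
    # 2. Execute tasks sequentially, giving each step the results so far.
    for task in tasks:
        context = "\n".join(f"{t} -> {r}" for t, r in results)
        output = llm(f"TASK: {task}\nGOAL: {goal}\nPRIOR RESULTS:\n{context}")
        results.append((task, output))
    return results  # a per-step trace that can be reviewed afterwards


steps = plan_and_execute(fake_llm, "Assess the EV charging market")
for task, output in steps:
    print(task, "->", output)
```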

🛠️ Applications include:

- Market research agents
- Legal document review
- Portfolio rebalancing in wealth management

This also introduces new risks—bias in task decomposition, ungoverned execution flows, or “planning drift.” I addressed this in my article on Agentic AI Safety, highlighting the need for agent supervision, outcome auditability, and ethical guardrails.

6️⃣ Multi-Agent Collaboration: Orchestrated Intelligence

The most advanced form involves distributed collaboration between specialist agents—planners, retrievers, generators, judges—working in concert, often with real-time feedback loops and environment sensing.

It mirrors corporate departments working together:

- Query Agent = strategy consultant
- Generator = analyst
- Retrieval = researcher
- Judge = executive reviewer
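The division of labour above can be sketched as four small role functions with a judge-driven feedback loop. Everything here is hypothetical and deliberately simplified: each role is a plain Python function over an in-memory `DOCS` dictionary, where a real system would back each agent with its own model prompt, retrieval service, or review policy.

```python
from typing import Optional

# Hypothetical document store standing in for a real retrieval backend.
DOCS = {
    "diligence": "Target has two pending lawsuits.",
    "revenue": "Target revenue is concentrated in one client.",
}


def query_agent(request: str) -> list[str]:
    """Strategy role: turn the request into retrieval topics."""
    return [topic for topic in DOCS if topic in request.lower()]


def retriever(topics: list[str]) -> list[str]:
    """Research role: fetch evidence for each topic."""
    return [DOCS[t] for t in topics]


def generator(evidence: list[str], feedback: Optional[str]) -> str:
    """Analyst role: draft a report, addressing reviewer feedback if there is any."""
    draft = " ".join(evidence)
    if feedback:
        draft += " Risk rating: high."
    return draft


def judge(draft: str) -> Optional[str]:
    """Executive-review role: return feedback, or None to approve."""
    return None if "Risk rating" in draft else "Add an overall risk rating."


def run_team(request: str, max_rounds: int = 3) -> str:
    evidence = retriever(query_agent(request))
    feedback: Optional[str] = None
    draft = ""
    for _ in range(max_rounds):
        draft = generator(evidence, feedback)
        feedback = judge(draft)
        if feedback is None:  # judge approves: stop iterating
            break
    return draft


report = run_team("Prepare a diligence and revenue summary")
print(report)
```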

🧩 This is the blueprint for what I call “AI Operating Models”—where enterprises deploy agent teams to replace or augment full workflows.

📉 In pilot deployments at PwC, such multi-agent systems led to task cost reductions of 60–70%, especially in deal diligence and risk assessments.

Why This Matters: Trust, Safety, and the Future of HX

Agentic AI represents a shift from AI as a passive tool to AI that makes decisions. The difference isn’t just in architecture; it’s in consequence.

As I’ve argued in Genesis: Human Experience in the Age of AI, the more autonomous our systems become, the more intentional we must be with their design, governance, and integration with human values.

Key considerations:

- 🧭 Governance: How do we audit multi-agent actions?
- ⚖️ Ethics: How do we prevent agentic drift from original user intent?
- 🤝 HX Design: How do agents preserve empathy, dignity, and fairness?

As you explore these six patterns in your own organisation, I urge you to ask not just “Can it be done?” but “Should it be done—and who will be accountable when it is?”

Final Thoughts

We are at the dawn of a new enterprise intelligence stack—powered by agents, planning, reasoning, and dynamic cooperation. The six patterns above are not static blueprints—they are evolving grammars of machine thought. Understanding and mastering them is essential for any leader shaping the future of work, trust, and value.

📚 Continue the conversation via my full series on Agentic AI Safety, Architecture, and Human Experience on LinkedIn.
